Image: freepik

Nawridho A. Dirwan – Research & Development Officer at Ruangobrol.id

Violent extremism poses a significant threat to global peace and security, as it can lead to acts of terrorism, radicalisation, and the recruitment of individuals into extremist ideologies. Preventing and countering violent extremism (PCVE) is a complex, multifaceted challenge that calls for creative solutions. In recent years, artificial intelligence (AI) has emerged as a powerful tool in the fight against violent extremism. Used responsibly, AI can help prevent and counter violent extremism by enhancing early detection and monitoring without discarding ethical considerations.

Early Detection

Early detection is one of the most important components of PCVE. AI technologies can analyse massive volumes of data from sources such as social media, online forums, and communication platforms to identify signs of radicalisation and extremist propaganda. Machine learning algorithms can pick out patterns, keywords, and sentiments indicative of extremist ideologies, allowing authorities to respond quickly.

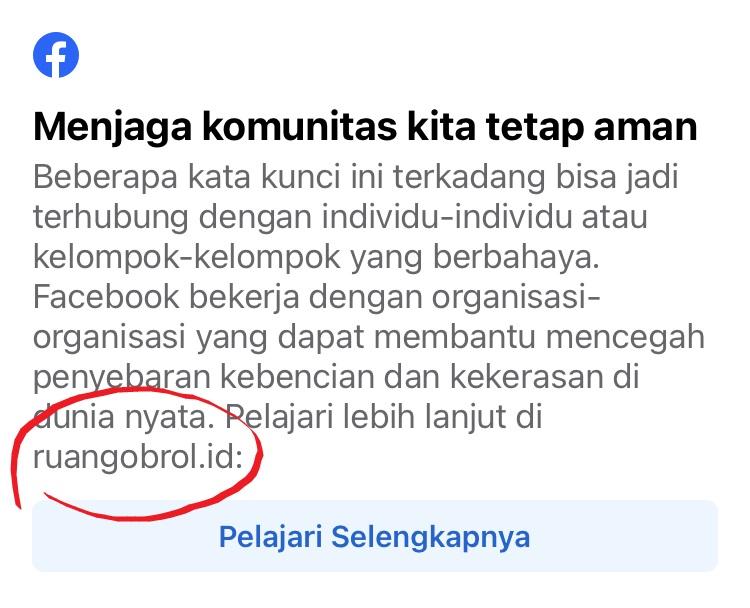

For example, the Redirect Initiative, part of the Counterspeech project, was introduced by Facebook to prevent radicalisation on its platform by redirecting hate- and violence-related search terms towards resources, education, and outreach groups that can help. In Indonesia, Facebook collaborated with Ruangobrol to compile dangerous VE-related terms and redirect searches for them to Ruangobrol's website.

Screenshot example of Redirect Initiative on Facebook

Redirecting these keywords keeps individuals from finding posts that may contain the extremist narratives associated with them. However, extremist groups are learning and are beginning to evade the keyword block by replacing one or more letters with numbers, which makes the terms harder to track.
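One common countermeasure to letter-to-number substitution is to normalise the text before matching it against the keyword list. The following is a minimal sketch of that idea, not a description of how Facebook or Ruangobrol implement the Redirect Initiative. The substitution table `LEET_MAP`, the placeholder list `BLOCKED_TERMS`, and both function names are assumptions made for illustration.

```python
import re
from typing import Set

# Hypothetical substitution table: characters often swapped in for letters.
# Some mappings are ambiguous (for example "1" may stand for "i" or "l").
LEET_MAP = str.maketrans({
    "0": "o", "1": "i", "3": "e", "4": "a",
    "5": "s", "7": "t", "@": "a", "$": "s",
})

# Placeholder watchlist; a real list would be curated by subject experts.
BLOCKED_TERMS = {"badterm", "otherterm"}


def normalise(text: str) -> str:
    """Lower-case the text and undo common character substitutions."""
    return text.lower().translate(LEET_MAP)


def find_blocked_terms(query: str, blocked: Set[str] = BLOCKED_TERMS) -> Set[str]:
    """Return the watchlist terms that appear as whole words in the query."""
    words = re.findall(r"[a-z]+", normalise(query))
    return {word for word in words if word in blocked}
```

With this sketch, a search for `b4dt3rm` is matched as `badterm`. A fixed table only catches substitutions someone has already anticipated, so it would need regular updating as evasion tactics change.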

Visual Analysis

AI-powered image and video analysis can also detect extremist symbols, signs, or propaganda materials, making it easier to monitor and respond to threats. Tech companies including Facebook, Microsoft, Twitter, and YouTube have started an industry-led project called the Global Internet Forum to Counter Terrorism (GIFCT) to stop the spread of terrorist content online.

One of GIFCT's initiatives is the Hash Sharing Database. Each hash in the database is labelled so that other members can understand what content it corresponds to, including the content type, the terrorist entity that produced the content, and its behavioural elements. This enables members to identify and exchange signs of violent extremist and terrorist behaviour securely, effectively, and privately.
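The idea of sharing labelled hashes rather than the content itself can be sketched as follows. This is an illustration only and does not reflect GIFCT's actual schema or API: the class and field names are hypothetical, and SHA-256 is used purely for simplicity. A cryptographic hash only matches byte-identical files, whereas production systems typically rely on perceptual hashes that tolerate re-encoding and small edits.

```python
import hashlib
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class HashLabel:
    """Hypothetical label fields, mirroring the attributes named in the text."""
    content_type: str   # e.g. "image" or "video"
    entity: str         # entity that produced the content
    behaviour: str      # behavioural element, e.g. "recruitment"


def fingerprint(data: bytes) -> str:
    """Compute a hash locally, so the raw content never has to be shared."""
    return hashlib.sha256(data).hexdigest()


class SharedHashDatabase:
    """Toy in-memory stand-in for a database shared between member platforms."""

    def __init__(self) -> None:
        self._labels: Dict[str, HashLabel] = {}

    def add_hash(self, digest: str, label: HashLabel) -> None:
        """A member contributes a hash plus its label, not the file itself."""
        self._labels[digest] = label

    def lookup(self, data: bytes) -> Optional[HashLabel]:
        """Another member checks an upload against the shared hashes."""
        return self._labels.get(fingerprint(data))
```

One member would call `add_hash(fingerprint(file_bytes), label)` after reviewing a file, and another would call `lookup(upload_bytes)` on new uploads. A result of `None` means only that the file is not byte-identical to a known one.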

AI-powered image and video analysis is not infallible, however, which is why tech platforms like Meta currently still rely on human reviewers to help decide which content contains dangerous messages.

Monitoring through Social Network Analysis (SNA)

AI-driven social network analysis is another crucial tool for PCVE. Extremist organisations often recruit and radicalise individuals through social networks. AI can help identify the connections and relationships between known extremists and potential recruits. This information is vital for tracking the spread of extremist ideologies and dismantling recruitment networks.

In Indonesia, one platform that uses SNA is Drone Emprit. Its system uses AI to monitor and analyse social media, mapping where information originated, which accounts are spreading it, who the influencers are, and so on. Although Drone Emprit is not a PCVE-focused platform, its capabilities are promising for use in these efforts.

By mapping social networks and analysing communication patterns, AI can highlight individuals at risk of radicalisation or those who play central roles in extremist circles. This allows law enforcement and CVE practitioners to intervene, engage, and offer support to individuals who may be vulnerable to extremist propaganda.
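The mapping described above can be illustrated with two basic graph measures: degree centrality, which shows who is most connected within a network, and a count of each account's direct links to already-known accounts. This is a simplified sketch under stated assumptions, not the method used by Drone Emprit or any other platform. The function names and the edge-list input format are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Set, Tuple

Graph = Dict[str, Set[str]]


def build_graph(edges: Iterable[Tuple[str, str]]) -> Graph:
    """Build an undirected graph from (account, account) interaction pairs."""
    graph: Graph = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)
    return graph


def degree_centrality(graph: Graph) -> Dict[str, float]:
    """Fraction of the other accounts each account is directly connected to."""
    n = len(graph)
    if n <= 1:
        return {node: 0.0 for node in graph}
    return {node: len(neighbours) / (n - 1) for node, neighbours in graph.items()}


def links_to_known(graph: Graph, known: Set[str]) -> Dict[str, int]:
    """For each account not in `known`, count its direct links to known accounts."""
    counts: Dict[str, int] = {}
    for node, neighbours in graph.items():
        if node in known:
            continue
        shared = len(neighbours & known)
        if shared:
            counts[node] = shared
    return counts
```

A high score on either measure indicates only that an account is well connected. It says nothing about intent, so results like these are a starting point for human review and outreach rather than a conclusion.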

Ethical Considerations and Challenges

For all its sophisticated capabilities, AI is not omnipotent. AI systems learn from data: the more data fed into a system, the more it learns. But collecting more data raises ethical, security, and privacy dilemmas that are still being debated. There are concerns about the use of AI for surveillance and about the possibility of false positives, which could lead to the unjust targeting of innocent people.
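The false-positive concern can be made concrete with simple arithmetic: when the behaviour being searched for is very rare, even an accurate classifier flags far more innocent people than genuine cases. The sketch below uses entirely hypothetical numbers chosen for illustration, not measurements from any real system: one million accounts, 0.01% of them genuinely of concern, and a detector with 99% sensitivity and 99% specificity.

```python
from typing import Tuple


def flag_counts(population: int, prevalence: float,
                sensitivity: float, specificity: float) -> Tuple[float, float]:
    """Return the expected (true positives, false positives) among flagged accounts."""
    positives = population * prevalence          # accounts genuinely of concern
    negatives = population - positives           # everyone else
    true_pos = positives * sensitivity           # correctly flagged
    false_pos = negatives * (1.0 - specificity)  # wrongly flagged
    return true_pos, false_pos


def precision(true_pos: float, false_pos: float) -> float:
    """Share of flagged accounts that are genuinely of concern."""
    flagged = true_pos + false_pos
    return true_pos / flagged if flagged else 0.0


# Hypothetical scenario only; none of these figures come from a real system.
tp, fp = flag_counts(population=1_000_000, prevalence=0.0001,
                     sensitivity=0.99, specificity=0.99)
share = precision(tp, fp)
```

In this hypothetical scenario about 99 genuine cases are flagged alongside roughly 9,999 innocent accounts, so fewer than 1 in 100 flags is correct.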

Hence, for artificial intelligence to be a valuable ally in the battle against violent extremism, its use must be guided by ethical values and respect for individuals' privacy. More studies, policy debates, and recommendations are still required to address these challenges. Cooperation among stakeholders such as government, academics, civil society organisations, and tech companies is essential to maximising the potential of AI in PCVE while still respecting individual privacy.

Conclusion

Artificial intelligence has the potential to revolutionise the prevention and countering of violent extremism. AI can contribute significantly to global efforts to combat extremism by enhancing early detection, visual analysis, and social network analysis. However, the ethical use of AI in this context is paramount to ensure that fundamental rights and privacy are respected. As technology advances, it is crucial to harness AI's capabilities for the greater good, making our world safer and more resilient against the threat of violent extremism.
